HERCOLE Lab will present two papers at XAI 2026 on personalized trustworthy explanations and counterfactual explanations for sequential recommender systems.

Mar 6, 2026

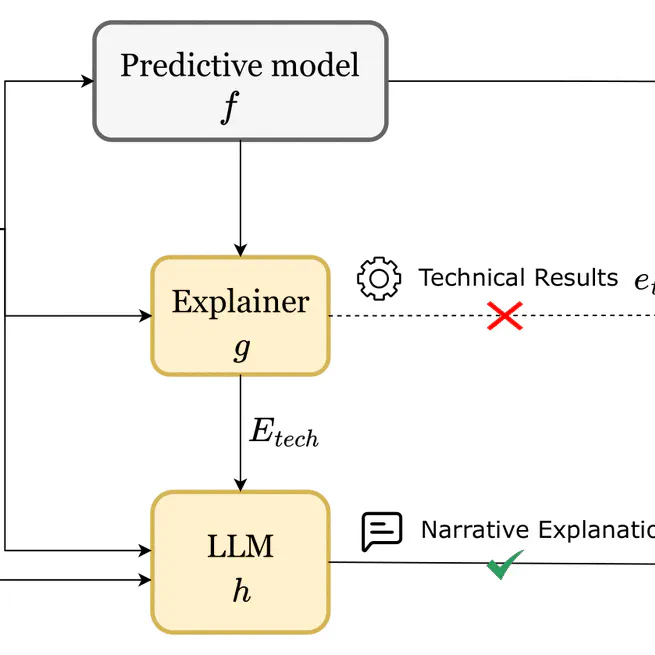

Introduction. The field of Explainable Artificial Intelligence (XAI) has flourished, producing a vast array of methods to elucidate the internal mechanisms and decision-making rationales of opaque models. Classical XAI techniques (such as feature attribution, saliency mapping, rule extraction, and counterfactual reasoning) seek to expose the logic underlying a model’s predictions. However, despite their algorithmic sophistication, the outputs of these explainers are often too intricate and mathematical for non-technical stakeholders to interpret. This mismatch between technical transparency and human interpretability remains a central challenge to the practical adoption of explainable AI.

Dec 4, 2025